How Oracle is building systems with AI to manage global databases

We are now moving into an era where there is so much data that it is becoming a challenge. A smartphone in a pocket can represent a terabyte of data. According to Phil Dunn, Director of Infrastructure Technology EMEA, Oracle, there is now so much data that getting any meaningful insight from it requires systems to evolve considerably, not only in hardware but also in intelligence. That means analytics and end-to-end insight into the data.

“Today data is the new oil. What separates one company from another is the data they have and the end-to-end insights they can get out of that data,” explains Dunn. “The data strategy of a company is critical to digital transformation,” he adds.

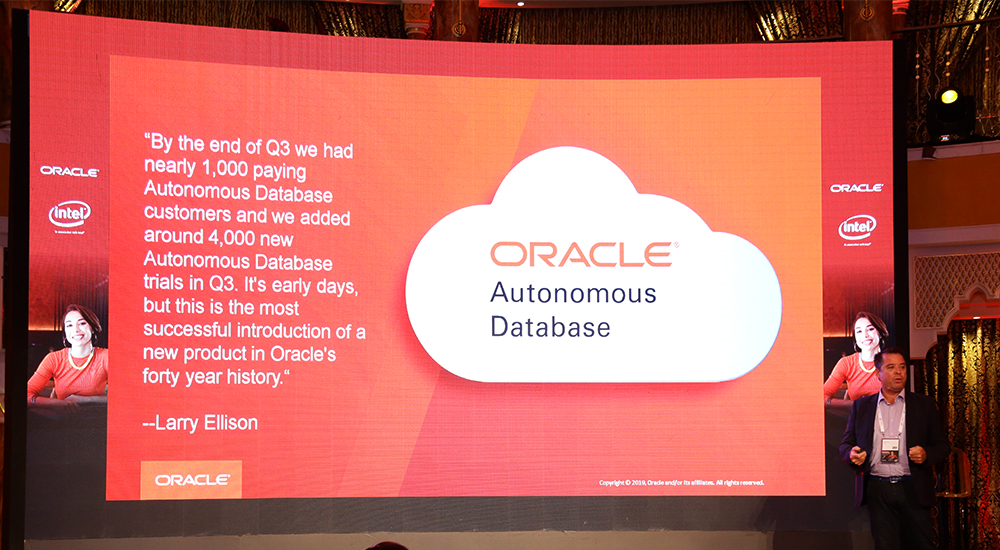

Oracle has been a database company for more than 40 years. For Oracle, the database is critical for managing all types of data. If a single database manages all sorts of data types, machine learning algorithms can be applied to that data directly to extract answers. “That is where the world and the future is going, because data is being managed by the database,” says Dunn.

Artificial intelligence has been a buzzword for the last 20 to 30 years. But only in the last ten years has Oracle implemented artificial intelligence, along with its subset, machine learning, in its database product. “We see it as a fundamental part of the data and the database,” says Dunn.

This sets Oracle apart from some of its competitors, which treat artificial intelligence or machine learning as an add-on in some of their vertical market applications, according to Dunn.

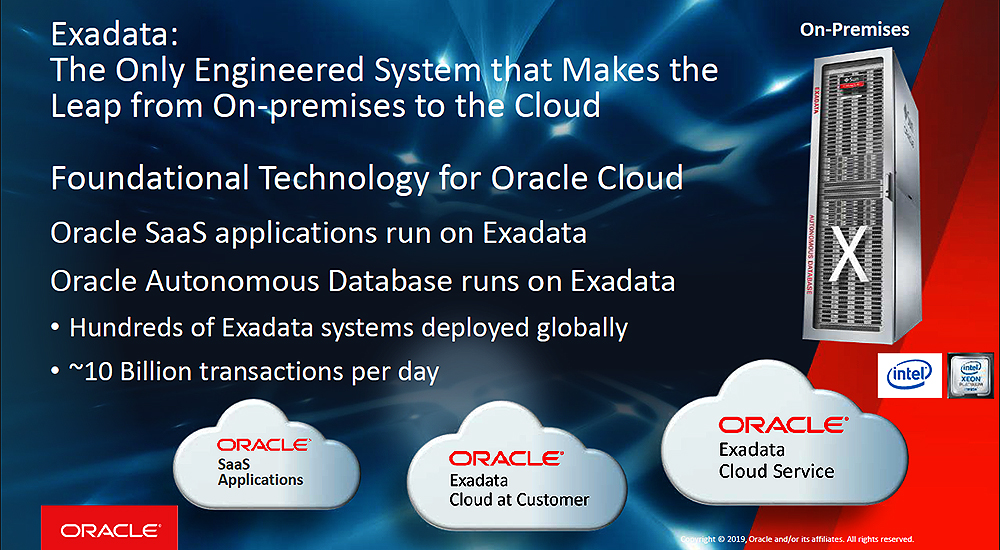

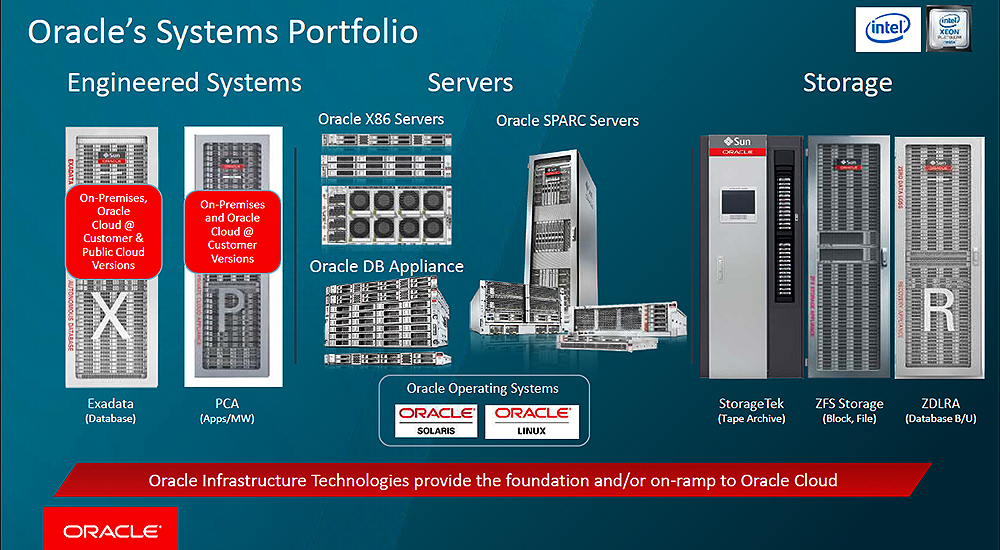

More than 15 years ago, Oracle recognised that owning the software and the database was not enough to succeed in the marketplace. With the growth of data and the storage associated with it, running analytics and business intelligence has come to require specialised hardware, not just commodity hardware.

Oracle Database requires specialised hardware optimised for data workloads, and above all for the database itself. “All of Oracle’s hardware products are first and foremost optimised for the database. Even more importantly we have certain products like Exadata and SPARC that are optimised for Oracle database,” points out Dunn.

Global scale

According to Dunn, Oracle has 350,000 customers globally, and almost half of the world’s data resides in Oracle databases. Securing and optimising that data is a big responsibility. “If your data is not available, you cannot run your business and if you cannot run your business you are losing money.”

To deliver security and scalability, Oracle builds encryption and data compression into the database. Oracle Database is one of the few database environments where data is encrypted end to end. Oracle systems are designed so that data remains encrypted along its entire path, from storage at rest all the way to analytics and machine learning.

Because organisations continue to be breached and their data hijacked through ransomware and other malicious tools, it is clear that firewalls and other perimeter solutions are not working. “The only way to properly protect the database is to have it always encrypted,” stresses Dunn.

Another tool Oracle uses to make its database more efficient is Hybrid Columnar Compression (HCC), a storage-related Oracle Database feature that causes the database to store the same column for a group of rows together. Storing column data together in this way can dramatically increase the storage savings achieved from compression.

Because database operations work transparently against compressed objects, no application changes are required. The HCC feature is available on database deployments created on Oracle Database Cloud Service using the Enterprise Edition.
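To illustrate why grouping a column's values together helps compression, the sketch below stores the same hypothetical table in row-major and column-major order and run-length encodes each layout. Run-length encoding is a deliberately simple stand-in chosen for clarity; HCC's actual compression algorithms are different and more sophisticated, and the table, its values and its batch sizes are invented for illustration.

```python
from itertools import groupby

def run_length_encode(values):
    """Collapse runs of consecutive equal values into (value, run_length) pairs."""
    return [(value, len(list(group))) for value, group in groupby(values)]

# Hypothetical table of 1,000 orders loaded in batches, so region and status
# stay constant over consecutive rows.
regions = ["EMEA", "APAC", "AMER", "LATAM"]
statuses = ["OPEN", "SHIPPED", "CLOSED"]
rows = [
    (100000 + i, regions[(i // 100) % len(regions)], statuses[(i // 50) % len(statuses)])
    for i in range(1000)
]

# Row-major layout: values from different columns are interleaved,
# so neighbouring values never repeat.
row_major = [value for row in rows for value in row]

# Column-major layout: all values of one column are stored together,
# so identical values end up next to each other in long runs.
column_major = [value for column in zip(*rows) for value in column]

row_runs = run_length_encode(row_major)
col_runs = run_length_encode(column_major)
print(f"row-major runs:    {len(row_runs)}")
print(f"column-major runs: {len(col_runs)}")
```

With these assumptions the row-major layout yields 3,000 runs, while the column-major layout yields 1,030, because the region and status columns collapse into a handful of long runs.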

Dunn explains the overall benefits of encryption and compression at the database. “Not only is the data encrypted but it is compressed. So, when you are transferring the data, or in storage, it stays in a compressed format until it needs to be analysed. Since you are transferring less data, you are able to transfer more data in the same amount of time.

“Once it hits the Exadata CPUs and the storage cells that are in Exadata it gets decompressed. You can then do the analytics and run all the applications,” he adds.
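Dunn's point about transferring less data can be put into rough numbers. The sketch below assumes a hypothetical 10 Gbit/s link and a hypothetical 10:1 compression ratio, and it ignores the CPU cost of compression and decompression as well as protocol overhead; real HCC ratios depend heavily on the data.

```python
def transfer_seconds(logical_bytes, link_bytes_per_s, compression_ratio=1.0):
    """Time to send data whose on-the-wire size is logical_bytes / compression_ratio."""
    wire_bytes = logical_bytes / compression_ratio
    return wire_bytes / link_bytes_per_s

TB = 10**12                  # decimal terabyte
link = 10 * 10**9 / 8        # hypothetical 10 Gbit/s link = 1.25e9 bytes per second

uncompressed = transfer_seconds(1 * TB, link)
compressed = transfer_seconds(1 * TB, link, compression_ratio=10.0)  # assumed 10:1 ratio
print(f"uncompressed: {uncompressed:.0f} s, compressed: {compressed:.0f} s")
```

Under these assumptions, moving 1 TB takes about 800 seconds uncompressed and about 80 seconds compressed, so the same link carries ten times as much logical data in a given time.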

Cloud at Customer

When customers build on Oracle Cloud resources, they usually have a choice of where to host and deploy. For customers in the region, where Oracle Cloud has no in-country hosting, Oracle offers a solution called Cloud at Customer.

Says Dunn, “We have always made our systems available directly to customers. And where it is not available, we actually productised our systems that are in our cloud and delivered them to our customers in their datacentre.”

Cloud at Customer offers the same cloud experience and the same operating-expense subscription model, but hosted in the customer’s datacentre. Oracle has been delivering Cloud at Customer for the last three to four years. Although the solution is hosted on-premises, the experience is the same as in the cloud, with the same interface and usage. The only additional requirement is a contractual obligation.

Whether the customer opts for an Oracle SaaS, PaaS, IaaS or Autonomous Database service, it gets the same benefits as subscribing directly from the cloud. And because the solution is hosted on-premises, the data it generates never leaves the customer’s datacentre.

Says Dunn, “This is a great advantage for customers that have a sovereignty and governance issue. The Cloud at Customer is managed entirely by Oracle. It just happens to be inside the customer’s firewall.”

Running AI and ML

To run artificial intelligence applications, Dunn points out, the Oracle Exadata platform does not need the additional numerical processing provided by graphics processing units (GPUs). Says Dunn, “GPU does not help the Oracle database at all. It does work very well with AI specific type environments that are outside of the database. And the problem is when it is outside of the database, you have got to duplicate the data, you have to move the data out of the database into this other environment.”

Duplicating data, especially terabytes of it, consumes time and resources. That is why Oracle avoids this approach and runs all routines within the database. Oracle’s self-running, self-optimising Autonomous Database is built around artificial intelligence and machine learning running within the database.
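The difference between moving data out to an external environment and computing next to it can be sketched with a small least-squares fit. In this sketch Python's built-in `sqlite3` module stands in for Oracle Database, and the `readings` table and the simple linear regression are illustrative assumptions, not Oracle's in-database machine learning API. Both approaches produce the same model, but the in-database one returns five aggregate values instead of every row.

```python
import sqlite3

def ols(n, sx, sy, sxx, sxy):
    """Ordinary least-squares line y = slope * x + intercept from sufficient statistics."""
    slope = (n * sxy - sx * sy) / (n * sxx - sx * sx)
    intercept = (sy - slope * sx) / n
    return slope, intercept

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE readings (x INTEGER, y INTEGER)")
# Synthetic data lying exactly on y = 2x + 1.
conn.executemany("INSERT INTO readings VALUES (?, ?)", [(x, 2 * x + 1) for x in range(100)])

# Approach 1: copy every row out of the database and fit in the client.
rows = conn.execute("SELECT x, y FROM readings").fetchall()
client_fit = ols(
    len(rows),
    sum(x for x, _ in rows),
    sum(y for _, y in rows),
    sum(x * x for x, _ in rows),
    sum(x * y for x, y in rows),
)

# Approach 2: let the database compute the same statistics in place,
# so a single row of five numbers leaves it instead of every row.
stats = conn.execute(
    "SELECT COUNT(*), SUM(x), SUM(y), SUM(x * x), SUM(x * y) FROM readings"
).fetchone()
in_db_fit = ols(*stats)
conn.close()

print(f"rows copied out: {len(rows)}, values returned in-database: {len(stats)}")
print(client_fit, in_db_fit)  # (2.0, 1.0) (2.0, 1.0)
```

With 100 rows the saving is trivial, but the gap grows with the table: the in-database query always returns five numbers, however many terabytes sit behind it.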

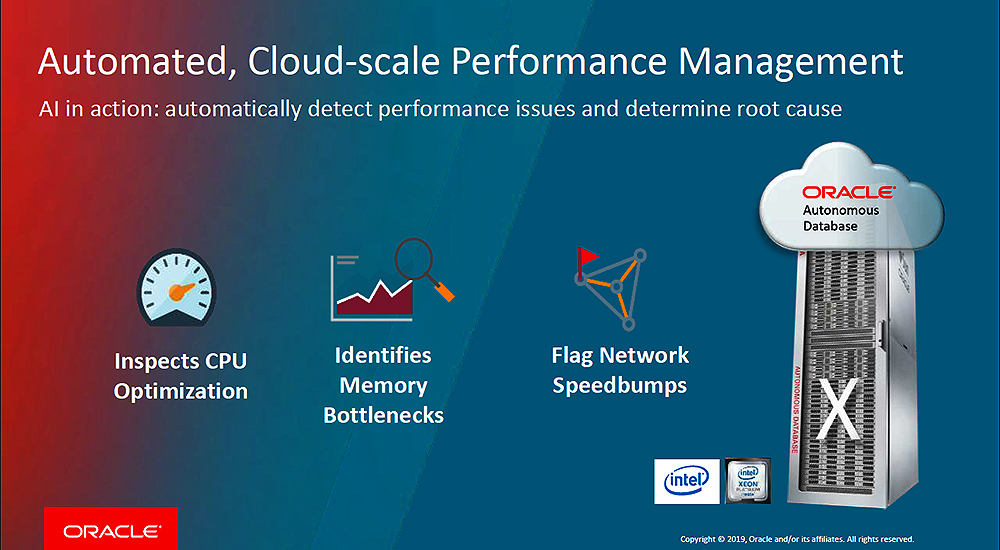

To support the Exadata platform, Oracle has developed 60 unique technologies over the last ten years, designed to enhance performance, scalability, security and manageability.

As an example, Dunn points out that the flash-based storage cells within Exadata have dedicated CPUs attached to them. This offloads storage processing from the database processing, allowing the database CPUs to deliver much higher performance.

“We essentially have a lot more compute horsepower to do all of the artificial intelligence, machine learning type of functions. It is quite an intricate architecture of technologies that we have accumulated over the last few years.”
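One way dedicated CPUs in storage cells can reduce the work done by database servers is by filtering rows where they are stored, so that only matching rows cross the network; Oracle calls this kind of Exadata offload Smart Scan. The sketch below is a hypothetical model, not Exadata's implementation: the `StorageCell` class, the schema and the query predicate are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Iterator

Row = tuple[int, str, float]  # hypothetical schema: (order_id, region, amount)

@dataclass
class StorageCell:
    """Hypothetical storage cell with its own CPU for local processing."""
    rows: list[Row]

    def read_all(self) -> Iterator[Row]:
        """Plain storage: ship every row to the database server."""
        yield from self.rows

    def scan(self, predicate: Callable[[Row], bool]) -> Iterator[Row]:
        """Offloaded scan: the cell's CPU applies the filter before shipping."""
        for row in self.rows:
            if predicate(row):
                yield row

regions = ["EMEA", "APAC", "AMER", "LATAM"]
# Four cells holding 2,500 rows each.
cells = [
    StorageCell([(i, regions[i % 4], float(i)) for i in range(start, start + 2_500)])
    for start in range(0, 10_000, 2_500)
]

def wanted(row: Row) -> bool:
    """Hypothetical query: EMEA orders with an amount above 9,000."""
    return row[1] == "EMEA" and row[2] > 9_000

# Without offload: every row crosses the network and the server filters.
shipped_plain = [row for cell in cells for row in cell.read_all()]
result_plain = [row for row in shipped_plain if wanted(row)]

# With offload: each cell filters locally and ships only matching rows.
shipped_offload = [row for cell in cells for row in cell.scan(wanted)]

print(len(shipped_plain), len(shipped_offload))  # 10000 249
```

Both paths return the same 249 rows; the offloaded path simply moves 249 rows instead of 10,000, leaving the database server's CPUs free for other work.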

Microsoft and Oracle

While Oracle regards Microsoft as one of its biggest competitors, many of its customers also use the Microsoft platform alongside the Oracle platform. “Most of our customers are running Microsoft. They are running Microsoft Office 365 but they are also running Oracle database and Oracle applications,” says Dunn.

However, according to Dunn, the real problem is that you cannot properly run Oracle Database on the Azure cloud, and you cannot properly run Office 365 on Oracle Cloud. Customers were having a difficult time working out how to combine the two.

To solve these multi-cloud challenges, Microsoft and Oracle have interconnected their datacentres with high-bandwidth, low-latency connections. This optimised multi-cloud environment offers both Oracle and Microsoft end users single sign-on as well as common management tools.

Microsoft’s Active Directory is connected to Oracle’s single sign-on technology, and Microsoft security is mapped to Oracle’s encryption technology.

“We are allowing customers that want to run Oracle applications as well as Microsoft applications in the Azure Cloud, while leveraging Oracle Cloud, Oracle Exadata, Oracle Autonomous database which Microsoft does not have,” points out Dunn.

Oracle Exadata X8 and Oracle Database 19c

Oracle Database offers the functionality that mission-critical workloads require. To improve performance and ease of operation, Oracle has been integrating the database with the Exadata platform for the past ten years. The newly announced Exadata X8 includes the latest x86-based hardware optimised for Oracle workloads. This lowers latency, particularly I/O and memory latency, which is important for database response time and throughput.

More importantly, Oracle is also integrating artificial intelligence and machine learning into Exadata X8 and Oracle Database 19c. This deepens the integration and enables faster development of real-time operational and functional improvements: the more operational system data is available to artificial intelligence and machine learning, the faster and more effectively those solutions can be developed.

Artificial intelligence needs sustained access to as much operational system data as possible. Oracle is achieving this by making Exadata the platform for Oracle Cloud, Cloud at Customer and Cloud Applications. The whole is worth far more than the sum of its parts.

Open source practitioners have recently developed a flood of alternative databases. However, analyst firm Wikibon concludes that these databases support different types of workload. Those workloads are growing faster, but they are not replacing traditional systems-of-record workloads and databases.

There are alternative public cloud databases that can address systems-of-record workloads. However, Wikibon concludes that they do not have the same functionality as Oracle Database. Wikibon also concludes that the IT cost of conversion, the disruption costs to the lines of business, and the risk of serious business disruption outweigh any benefits. As a result of this analysis, Wikibon continues to strongly advise against any strategy that involves database conversion.

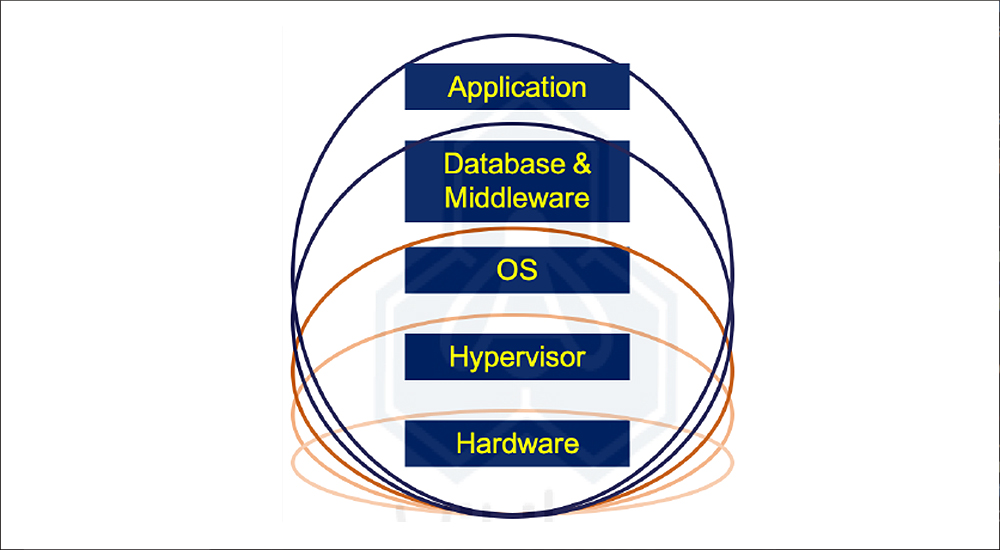

Wikibon has been discussing the benefits of converged infrastructure for the last decade. With converged infrastructure, a single source, the vendor or integrator, delivers the integrated hardware and software stack. This source is also responsible for creating all service level agreements, including updates and security patches, and for all aspects of maintenance. This approach lowers operational costs and reduces infrastructure. The vendor or integrator takes greater responsibility for delivering improvements and security as a continuous service.

However, the most important benefit practitioners have found is reduced friction from the unique infrastructure, processes and procedures that enterprise IT creates. Converged infrastructure enables faster deployment of new applications and application improvements. It is inherently more secure and easier to adapt to changing circumstances.

Public clouds owe their success to building a set of IT services on converged and hyperconverged infrastructure. Such infrastructure is now the foundation of what Wikibon calls true private clouds and true hybrid clouds.

To take full advantage of converged and hyperconverged platforms such as Exadata X8, IT usually needs to change the organisation. Making these changes is often difficult, and the resulting friction can prevent organisations from achieving all the benefits of converged and hyperconverged infrastructure.

Wikibon believes that Oracle will continue to push artificial intelligence inference code to the on-premises Exadata environments, because the compute has to be next to the data. The Autonomous Database Platform will be a combination of Oracle Database and Exadata. Wikibon believes that Oracle will need to move to a continuous improvement and deployment model and move to a cloud pay-per-drink financial model over time.

Exadata innovations

Wikibon believes that some of the most important Exadata improvements will come from artificial intelligence and machine learning. The foundation is a common platform with common components across all Exadata instances, which allows data to be gathered across many different workloads and environments. These streams of data from public and private clouds can allow Oracle to develop sophisticated ways of improving data services and automating operational procedures.

Of course, there needs to be an open and clear understanding of what data is collected and when. However, for the most part the objectives of Oracle and its customers are aligned: better performance, greater reliability and greater compliance for mission-critical application databases. The number of Exadata systems deployed is very important in establishing a critical mass of data streams from which machine learning can be useful. Improved inference code can then be developed and deployed to Exadata customers.

Some of the machine learning and inference code will generate general improvements across most or all environments. An example is a specialised cache fusion and RDMA algorithm for communicating transaction information. This is developed centrally, and the inference code is enabled locally.

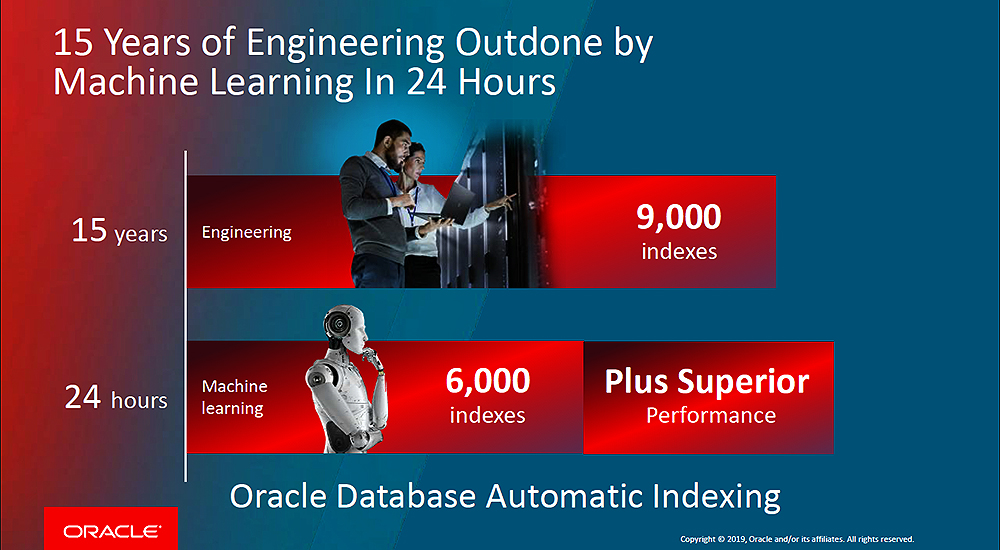

Other learning will be more specific to the interactions between the database software and the infrastructure for a particular workload. An example might be tuning a specific, important database by creating indexes. This requires deep, real-time interaction between Oracle Database 19c and the Exadata X8 components, iterating towards an optimum state using reinforcement learning.
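The iterate-towards-an-optimum idea can be illustrated with a multi-armed bandit, a single-state special case of reinforcement learning, choosing among candidate index configurations. This is a swapped-in teaching example, not Oracle's method: the index names, their latencies and the noise model are hypothetical, and a real system would measure latency from live queries rather than simulate it.

```python
import random

# Hypothetical candidate index configurations and their true mean query latency (ms).
# The tuner never reads these directly; it only sees noisy measurements.
TRUE_LATENCY_MS = {
    "no_index": 120.0,
    "idx_customer_id": 35.0,
    "idx_order_date": 60.0,
    "idx_customer_id_order_date": 20.0,
}

def measure_latency(config: str, rng: random.Random) -> float:
    """Simulated measurement: true latency plus Gaussian noise."""
    return TRUE_LATENCY_MS[config] + rng.gauss(0.0, 5.0)

def epsilon_greedy_tuning(steps: int = 2000, epsilon: float = 0.1, seed: int = 7) -> str:
    """Epsilon-greedy bandit: mostly exploit the best-looking index, sometimes explore."""
    rng = random.Random(seed)
    configs = list(TRUE_LATENCY_MS)
    counts = {c: 0 for c in configs}
    mean_reward = {c: 0.0 for c in configs}  # reward = negative latency
    for _ in range(steps):
        untried = [c for c in configs if counts[c] == 0]
        if untried:
            choice = untried[0]                         # try every configuration once
        elif rng.random() < epsilon:
            choice = rng.choice(configs)                # explore
        else:
            choice = max(configs, key=mean_reward.get)  # exploit
        reward = -measure_latency(choice, rng)
        counts[choice] += 1
        # Incremental update of the running mean reward for this configuration.
        mean_reward[choice] += (reward - mean_reward[choice]) / counts[choice]
    return max(configs, key=mean_reward.get)

print(epsilon_greedy_tuning())  # idx_customer_id_order_date
```

The epsilon parameter trades off exploration against exploitation: with exploration switched off, one unlucky early measurement could lock the tuner onto a worse index.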

Oracle has moved aggressively to support mission-critical enterprise database-as-a-service across hybrid cloud environments. Real-time artificial intelligence and machine learning are key to improving the converged Exadata X8 platform. Wikibon believes Oracle can establish trust with its enterprise customers and establish a sustainable and unique volume of data to drive its artificial intelligence strategy. This will increase the amount of database code that will only run on the Exadata platform.

An alternative strategy for enterprises is to convert existing Oracle mission-critical applications to new cloud databases. Wikibon believes this lift-and-shift strategy is almost always wrong for this type of workload, and enterprises have not adopted it. Conversion costs are very high. Application developers must freeze existing applications, and the business cost of that freeze is even higher. Once the applications move to a generic cloud environment, the functional limitations of those cloud databases usually reduce enterprise productivity.

Wikibon has worked with many clients evaluating conversion projects, and these clients have consistently underestimated the business risk. This does not mean that enterprises should never convert an Oracle database to a cloud database: developers often deploy the wrong technology, or requirements change. In those cases, developers should deploy other, specialised cloud databases. Wikibon strongly believes in horses for courses.

Wikibon also believes that Oracle should move to a cloud consumption model and move its software licensing model to a cloud-first continuous improvement model. Wikibon’s primary recommendation is that Oracle Exadata X8 and Autonomous Database should be the default platform for large-scale mission-critical traditional database workloads.

Senior executives should regard Exadata X8 and its successors as the default platform for Oracle Database. It is time for database practitioners and their executive management to let go of the traditional roles of database design and of hardware and software optimisation. Accepting Oracle Cloud services for the appropriate mission-critical workloads will release headcount and reduce business risk. Practitioners will need new skills to design hybrid databases and support hybrid applications.

One useful classification of applications and workloads is as either deterministic or probabilistic. With most traditional computing workloads, if you have the same input and same code, you expect to generate exactly the same output. We can classify this as a deterministic workload. Examples of this are systems of record, such as finance, ERP, payroll, stock control, etc. Most compliance models assume deterministic outcomes.

There is also a broad set of problems that can be solved more efficiently with probabilistic methods, especially when the applications are driven by large amounts of data and heavy compute requirements. To meet constraints on elapsed time to solution, systems can dynamically choose algorithms designed to produce good-enough output more quickly.

Examples of probabilistic systems could include real-time price updates or deciding the price to bid for delivering an advertisement in real-time to an end-user. Speed to solution is more important than absolute accuracy and repeatability of the assessment. Hybrid applications will often be a combination of deterministic and probabilistic.
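The contrast between the two workload types can be shown with a small example: an exact average, which is deterministic, next to an estimate from a random sample, which is probabilistic. The data set, sample size and function names are hypothetical, and random sampling is just one simple way to trade accuracy for speed.

```python
import random
import statistics

# Hypothetical data set: 200,000 transaction amounts between 0 and 100.
data_rng = random.Random(2024)
amounts = [data_rng.uniform(0.0, 100.0) for _ in range(200_000)]

def exact_mean(values):
    """Deterministic: the same input and code always give exactly the same output."""
    return sum(values) / len(values)

def sampled_mean(values, sample_size, rng):
    """Probabilistic: estimate from a random sample. It reads far less data,
    but the answer is approximate and can differ from run to run."""
    return statistics.fmean(rng.sample(values, sample_size))

exact = exact_mean(amounts)
estimate = sampled_mean(amounts, 2_000, random.Random())  # unseeded: not repeatable
print(f"exact={exact:.3f}  estimate={estimate:.3f}  error={abs(exact - estimate):.3f}")
```

The exact mean is what a system of record such as payroll needs. The sampled estimate reads 1% of the data and is typically within about a unit of the true value here, which is good enough for a real-time pricing decision but unsuitable where compliance assumes repeatable results.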